Updated: April 3rd, 2026 - detail added on how the account was initially compromised.

--

When a widely used package is compromised, most teams follow a familiar path: they review the diff, identify the malicious version, and check whether it was pulled into their environment. That response is necessary, but it answers only part of the question.

Axios is a widely used HTTP client in the npm ecosystem. It sits deep in modern application stacks, embedded across developer tooling, backend services, frontend frameworks, and CI pipelines. Installs don’t happen in one place; they happen continuously across workstations, build systems, and production environments. In many cases, fresh dependencies are resolved automatically during deployment or scaling events, which means a compromised version can move well beyond developer machines.

During the exposure window, any environment resolving the affected versions executed attacker-controlled code. That is the part that should shape the response. The issue is not the version number or the dependency diff, but the fact that untrusted code ran inside systems that already held privileged access.

What actually happened

A maintainer account for axios was compromised, allowing malicious versions to be published and tagged so that standard installs would resolve to them.

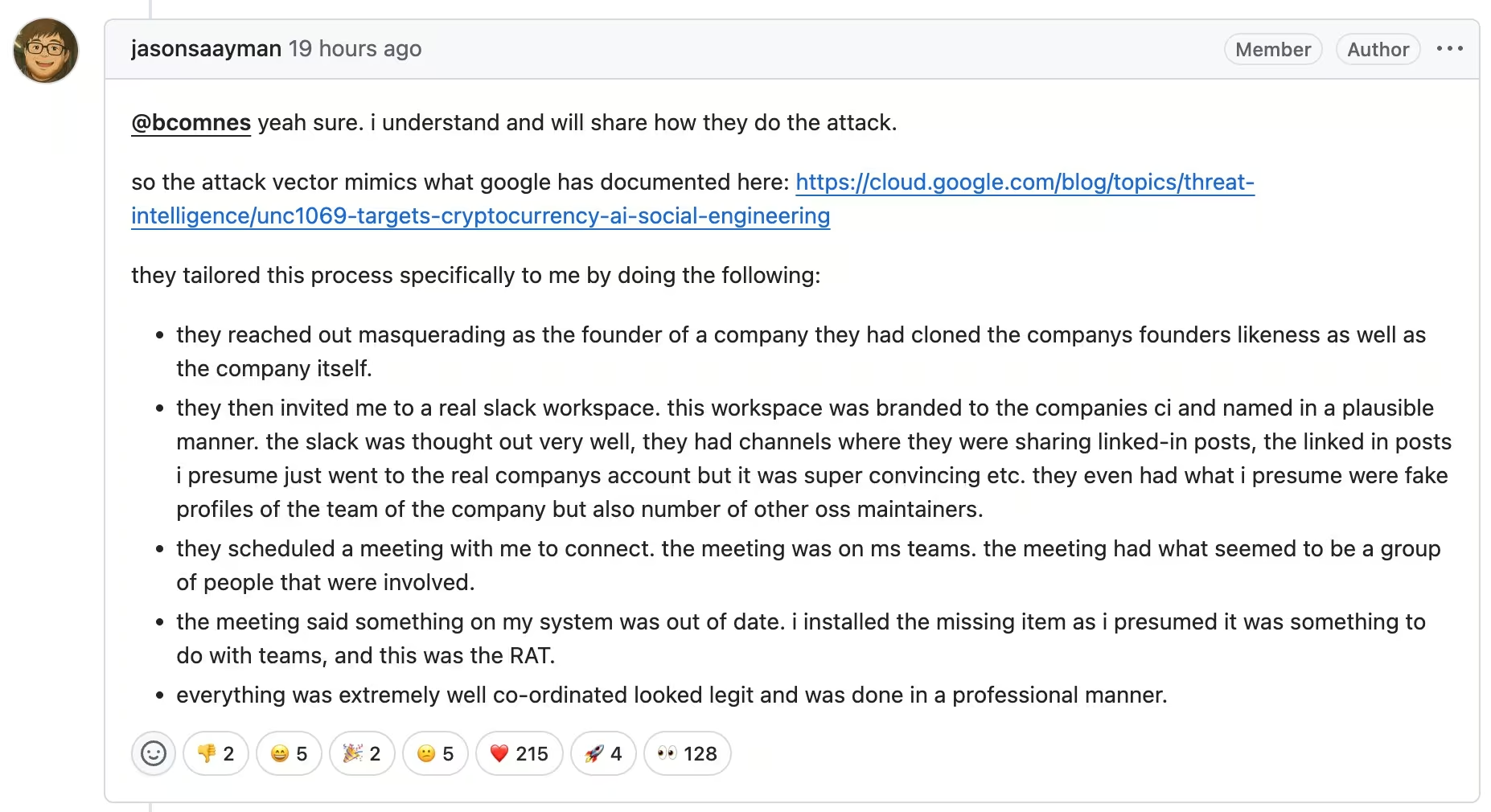

Subsequent details from the maintainer point to a targeted social engineering operation rather than a simple credential leak. The attacker impersonated a legitimate company, built a convincing Slack workspace with cloned branding and profiles of known engineers, and arranged a live meeting over Microsoft Teams. During that interaction, the maintainer was prompted to install what appeared to be a routine update, which in reality deployed the malware used to gain access to their environment.

From there, the attacker used that access to publish backdoored versions of axios directly to npm, bypassing the project’s normal release process.

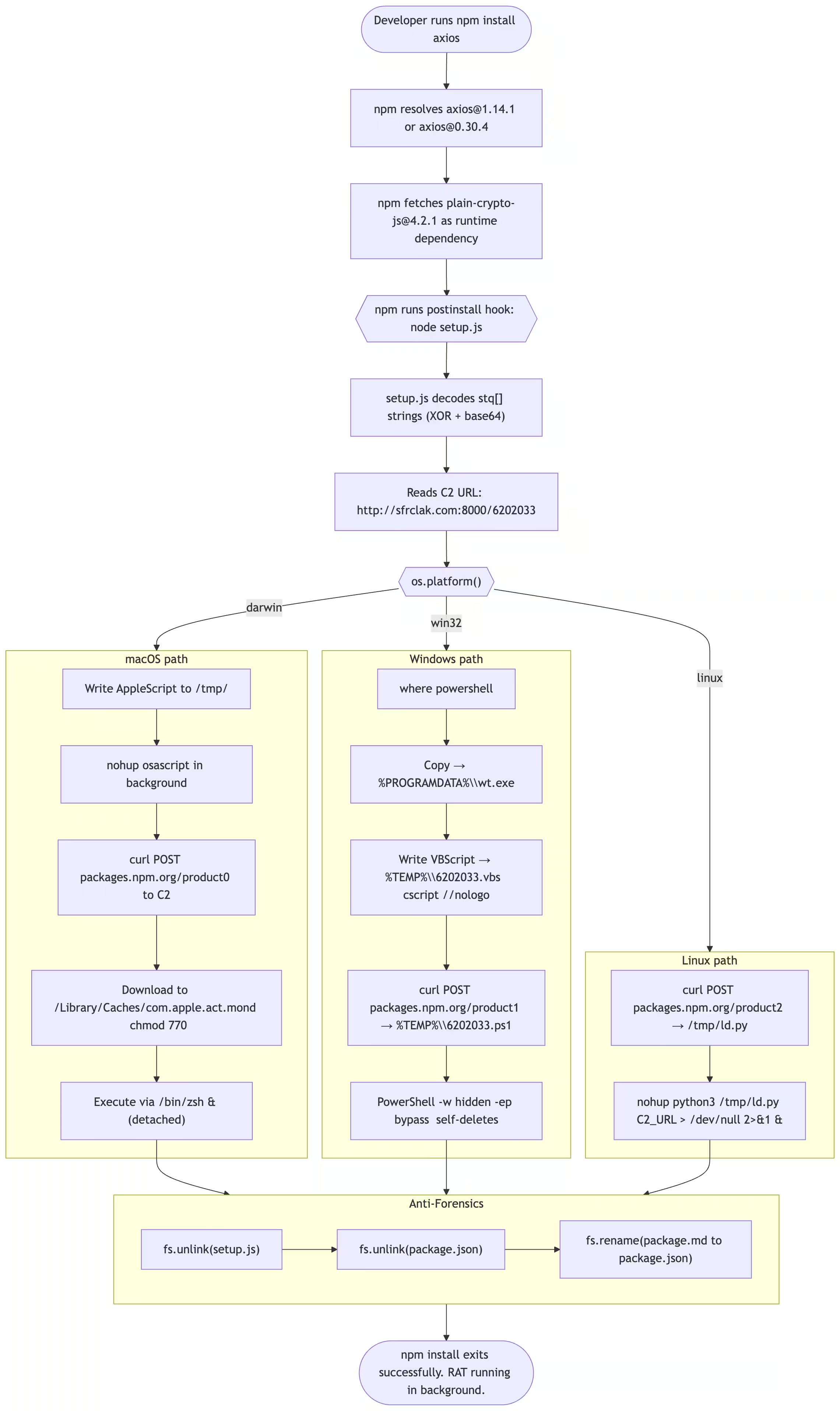

The change itself was minimal. A single dependency, plain-crypto-js, was added to the package, but it was never referenced anywhere in the codebase because it did not need to be. Its purpose was execution, not functionality.

That dependency introduced a postinstall hook, which ran automatically during the normal npm install process. There was no prompt, no warning, and no indication that anything had gone wrong. Instead of containing the final payload, the script acted as a dropper.

As soon as it executed, it reached out to attacker-controlled infrastructure and sent a request designed to resemble normal npm-related traffic. The request body mimicked legitimate registry communication, but the destination was external. The response delivered a platform-specific payload tailored to macOS, Windows, or Linux, which was then written to disk and executed in the background.

At that point, the objective had already been achieved. A remote access tool was running on the system, detached from the installation process.

The dropper then removed its own traces. The installer script was deleted, and the package metadata was rewritten to appear clean. Anyone inspecting the dependency afterward would see nothing obviously malicious. The installation would look legitimate, even though the payload had already executed.
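The install hook described above is an ordinary npm lifecycle script. As a minimal sketch, assuming a package manifest is available as parsed JSON (for example, from a tarball pulled for review before installation), the Python below lists any install-time scripts it declares. The function name `install_time_scripts` and the sample manifest are illustrative and are not taken from the incident. Because this dropper rewrote its metadata after running, the same check against an already-installed copy would likely show nothing.

```python
import json

# npm runs these lifecycle scripts automatically during `npm install`.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_time_scripts(manifest: dict) -> dict:
    """Return the install-time lifecycle scripts declared in a package.json dict."""
    scripts = manifest.get("scripts") or {}
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


# Hypothetical manifest for illustration only; not the real malicious package.
sample = json.loads(
    '{"name": "example-pkg",'
    ' "scripts": {"postinstall": "node scripts/build.js", "test": "jest"}}'
)
print(install_time_scripts(sample))
```

Where builds do not need lifecycle scripts at all, running `npm ci --ignore-scripts` prevents them from executing, at the cost of breaking packages that legitimately compile native code during install.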

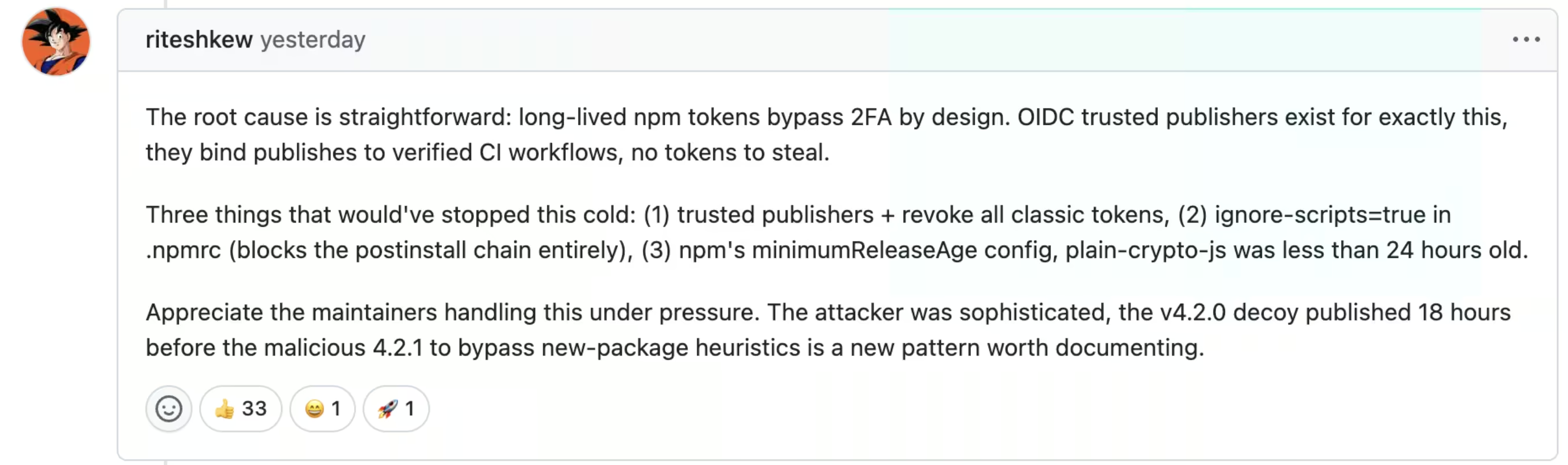

What makes this incident instructive is that it did not rely on an obviously weak setup. The maintainer had multi-factor authentication in place and had begun moving toward trusted publishing using OIDC. However, legacy publishing paths still relied on long-lived npm tokens, which bypass MFA by design.

That coexistence created the gap. The stronger control was present, but not enforced end to end. The attacker did not need to break the intended publishing workflow. They only needed to use the path that was still available.

Early discussion from the maintainer and the community quickly converged on the same pattern: strong controls were in place, but legacy authentication paths still created exposure.

Comment from user Riteshkew on GitHub

This is the pattern that repeats across supply chain incidents. The compromise happens through identity, the payload is delivered through trusted distribution, and the execution blends into normal behavior. By the time the change is noticed, the code has already run.

Source: StepSecurity

The outbound requests shown here are directed to attacker-controlled infrastructure, not the npm registry. The request body mimics npm-related traffic, which helps the activity blend into normal development workflows while retrieving the second-stage payload.

Why this blends into normal workflows

Poisoned packages are not new, but this case stands out because of how cleanly it aligns with normal development workflows and how it combines targeted access with broad distribution.

As discussed previously, the exploit itself rarely defines the outcome. What matters is what happens after code execution inside the environment.

Several factors make this incident more consequential than typical supply chain compromises.

Axios is widely embedded, which expands the potential blast radius far beyond a single application or team. The exposure was not limited to organizations that explicitly depended on it. Any package, build workflow, or CI job resolving it transitively during the window could have pulled the malicious release, making it difficult to quickly determine the full scope of impact.

Execution was immediate. The payload ran within seconds of installation, often through automated pipelines. Huntress observed the first known endpoint infection just 89 seconds after the malicious version was published, which reflects how quickly modern development environments resolve and execute new dependencies.

At the same time, the attacker took steps to minimize visibility. The installer removed itself, the package metadata was rewritten, and post-incident checks could suggest nothing was wrong. The modification itself was precise: one dependency added, no functional changes, and no obvious signal unless the right file was examined closely.

What also sets this case apart is how it began. The compromise did not originate in the package ecosystem itself, but with a targeted social engineering campaign against the maintainer. By operating through trust rather than exploiting a technical vulnerability, the attacker bypassed controls such as MFA and gained direct access to the release process.

That combination of targeted identity compromise and software supply chain distribution is what makes this type of attack difficult to anticipate and hard to contain.

From package install to credential exposure

The installation is only the entry point. Once the dropper executes, it inherits the permissions and access of the system it runs on.

In practice, that often includes GitHub tokens, npm tokens, cloud credentials, CI/CD secrets, API keys, and SSH material. None of these need to be exploited because they are already available within trusted environments such as developer workstations and build pipelines.

At that stage, the attacker no longer depends on the package itself. The focus shifts to what those systems can access and how that access can be reused.

We have already seen how this pattern develops. In the Shai-Hulud case, the attack moved quickly from execution to credential harvesting and reuse, spreading through repositories and pipelines by leveraging existing trust relationships.

The package acts as the delivery mechanism. The risk comes from what that delivery enables.

This pattern is not limited to npm packages. In the recent Trivy supply chain incident, attackers used compromised CI/CD tooling to execute code directly inside build pipelines, harvesting cloud credentials, Kubernetes secrets, and API tokens at scale. Different entry point, same outcome: execution inside a trusted environment, followed by immediate access to everything that environment can reach.

The visibility gap after the install

This is where most organizations lose clarity.

It is relatively straightforward to confirm whether a malicious version appears in a lockfile, but that does not indicate where the code actually executed. Build logs rarely capture the behavior of detached background processes, and developer machines often operate outside the same level of monitoring applied to production systems.
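The lockfile check itself can be sketched in a few lines. The example below assumes a `package-lock.json` in the newer format that carries a top-level `packages` map (lockfileVersion 2 or 3) and looks for the `plain-crypto-js` dependency named earlier. The function name and the sample lockfile, including its placeholder version strings, are hypothetical. A hit only shows that the dependency was resolved; it says nothing about where the install script actually ran.

```python
SUSPECT_DEPENDENCY = "plain-crypto-js"  # dependency named in the incident write-up


def find_suspect_entries(lock: dict, suspect: str = SUSPECT_DEPENDENCY) -> list:
    """Return lockfile package paths that are, or directly depend on, `suspect`."""
    hits = []
    for path, meta in lock.get("packages", {}).items():
        # "node_modules/a/node_modules/b" -> "b"; the root project has path "".
        name = path.rsplit("node_modules/", 1)[-1]
        declared = {
            **meta.get("dependencies", {}),
            **meta.get("optionalDependencies", {}),
        }
        if name == suspect or suspect in declared:
            hits.append(path or "<root>")
    return sorted(hits)


# Synthetic lockfile for illustration; versions are placeholders, not real releases.
sample_lock = {
    "lockfileVersion": 3,
    "packages": {
        "": {"dependencies": {"axios": "*"}},
        "node_modules/axios": {
            "version": "0.0.0-example",
            "dependencies": {"plain-crypto-js": "*"},
        },
        "node_modules/plain-crypto-js": {"version": "0.0.0-example"},
        "node_modules/left-pad": {"version": "0.0.0-example"},
    },
}
print(find_suspect_entries(sample_lock))
```

To scan a real project, load the file with `json.load` and pass the resulting dict to `find_suspect_entries`. Older lockfiles without a `packages` map would need a separate walk over the nested `dependencies` tree, which this sketch does not attempt.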

By the time the malicious dependency is identified and removed, the initial execution has already occurred, leaving teams with limited evidence and a set of unanswered questions.

Did the payload access credentials?

Were those credentials reused?

Did activity extend into cloud or SaaS environments?

In many cases, there is no definitive way to answer these questions using traditional tooling alone.

A practical way to validate exposure

For teams trying to move from “we might be affected” to something actionable, one of the fastest signals to check is outbound communication.

We published a dedicated 5-minute hunt in the Vectra AI Platform to identify systems that may have executed the malicious axios payload by looking for communication with the attacker infrastructure.

This hunt focuses on a small set of high-confidence indicators tied to the campaign, including the command-and-control domain sfrclak.com and the associated IP address 142.11.206.73. Any system communicating with that infrastructure during or after the exposure window should be treated as suspicious.

The query surfaces network sessions involving that domain or IP, along with the source and destination hosts, protocol, and connection frequency. In practice, analysts should pay attention to systems that show repeated or automated connections, or hosts that have no prior history of communicating with similar external infrastructure.
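For teams that only have plain-text proxy, DNS, or firewall logs to work with, the same idea can be approximated outside any platform. The sketch below is not the Vectra AI Platform query. It assumes a hypothetical whitespace-separated log format of `timestamp source_host destination ...` and counts, per source host, the lines that mention either indicator. The function name and the sample lines are invented for illustration.

```python
import re
from collections import Counter
from typing import Iterable

# Indicators named in the hunt description above.
C2_DOMAIN = "sfrclak.com"
C2_IP = "142.11.206.73"

# Match the domain (including subdomains) or the exact IP, with boundaries so
# that look-alikes such as "notsfrclak.com" or "142.11.206.730" are ignored.
_INDICATOR = re.compile(
    r"(?<![\w.-])(?:[\w-]+\.)*" + re.escape(C2_DOMAIN) + r"(?![\w-])(?!\.[\w-])"
    r"|(?<![\d.])" + re.escape(C2_IP) + r"(?!\.?\d)",
    re.IGNORECASE,
)


def count_hits_by_source(lines: Iterable[str]) -> Counter:
    """Count indicator matches per source host (assumed to be the second field)."""
    hits = Counter()
    for line in lines:
        fields = line.split()
        if len(fields) < 3:
            continue  # does not fit the assumed "timestamp source destination" shape
        if _INDICATOR.search(line):
            hits[fields[1]] += 1
    return hits


# Synthetic log lines; host names and timestamps are placeholders.
sample_lines = [
    "t1 build-runner-7 sfrclak.com",
    "t2 build-runner-7 142.11.206.73",
    "t3 dev-laptop-3 registry.npmjs.org",
    "t4 dev-laptop-3 notsfrclak.com",
]
for host, count in count_hits_by_source(sample_lines).most_common():
    print(host, count)
```

Hosts with repeated matches are the natural starting point for the pivot into endpoint data, since repetition suggests an automated process rather than a one-off lookup.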

From there, the investigation can move quickly. Pivot into DNS, HTTP, and SSL telemetry to understand the scope of communication, then correlate with endpoint data to identify the process responsible. If activity is confirmed, block the infrastructure and isolate the affected system for remediation.

This type of targeted hunt does not replace broader investigation, but it gives teams a fast way to identify likely compromised systems and prioritize response. In an incident where execution happens silently and evidence is limited, that initial signal can significantly reduce the time it takes to understand what actually ran in your environment.

Supply chain attacks pivot to identity

Once access is obtained, the attack no longer depends on malware persistence. Valid credentials provide a more reliable and less detectable path forward.

Attackers can authenticate, call APIs, and interact with systems using the same interfaces and workflows that developers and automation rely on every day. This allows them to blend into normal activity while extending their reach across environments.

The Shai-Hulud example illustrated how this works, using stolen tokens to create repositories, modify pipelines, and expand through existing trust relationships without introducing obvious anomalies at the individual event level.

The axios incident creates the same opportunity. A service account accessing resources it has never used before, a token appearing in a new context, or a pipeline behaving differently than expected are all individually explainable events. When viewed together, they form a pattern that points to misuse of access rather than normal operation.

Detecting what happens after the compromise

Once code execution has occurred, the challenge shifts from prevention to understanding how access is being used.

One of the earliest signals in this attack chain is outbound communication to command-and-control infrastructure. Even when the dropper removes its own artifacts, that network activity remains. Unusual external connections from developer machines, CI runners, or application environments can provide a clear indication that something is wrong, especially when the destination does not align with expected dependency or build behavior.

The Vectra AI Platform focuses on identifying these patterns across identity systems, cloud and SaaS environments, and network activity. It surfaces authentication behavior that does not match established usage, highlights access patterns that drift from expected workload behavior, and detects activity that suggests staging, persistence, or lateral movement.

Individually, these signals may not stand out. Correlated together, they reveal whether an incident stopped at execution or progressed into broader compromise.

What you can still trust

The axios incident does not end with the removal of a malicious package. It marks the point where certainty gives way to risk assessment.

Most teams can identify whether the affected versions were present. Fewer can determine where they executed or what those environments exposed at the time. That distinction matters, because it defines whether the incident was limited or whether it created lasting access.

If there is any possibility that the compromised versions were executed, the safest assumption is that the environment should no longer be trusted in its previous state. Developer machines, CI runners, and build systems often hold more access than intended, and that access is rarely fully mapped.

The initial response remains straightforward: pin to a known-clean version, remove the malicious dependency, and rebuild affected systems rather than attempting partial cleanup. The more difficult step is deciding what can still be trusted afterward.

Credentials associated with those environments should be treated as exposed, not because there is proof of misuse, but because there is no reliable way to prove the opposite. Rotation becomes necessary to re-establish trust.
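A rotation plan starts with knowing which secrets a given environment could have exposed. As a rough sketch, the Python below lists environment variable names that look like credentials, based on a name-matching heuristic that is purely an assumption of this example. It reports names only, never values, and it will miss secrets stored in files such as SSH keys or cloud CLI profiles.

```python
import os
import re
from typing import Mapping

# Heuristic name fragments; an assumption of this sketch, not an exhaustive list.
_SECRET_HINT = re.compile(
    r"(TOKEN|SECRET|PASSWORD|PASSWD|API_?KEY|ACCESS_KEY|PRIVATE_KEY|CREDENTIAL)",
    re.IGNORECASE,
)


def rotation_candidates(env: Mapping[str, str]) -> list:
    """Return sorted names of non-empty variables whose names suggest a secret."""
    return sorted(
        name for name, value in env.items() if value and _SECRET_HINT.search(name)
    )


# Print names only, so that no secret values end up in terminal or CI logs.
for name in rotation_candidates(os.environ):
    print(name)
```

Run on a developer workstation or inside a CI job, the output gives a first-pass inventory to reconcile against the secret store, so that rotation covers what the environment actually held rather than what it was expected to hold.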

This incident also highlights structural issues in how dependencies are handled. CI/CD pipelines that automatically resolve the latest available versions create a direct path for newly published malicious packages to be executed immediately. Introducing a delay between publication and adoption, along with stricter version pinning, reduces exposure by allowing time for issues to surface before deployment.
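The delay between publication and adoption can be expressed as a simple age check. The sketch below assumes publish timestamps in the shape of the `time` map found in npm registry package metadata (version mapped to an ISO 8601 timestamp, plus `created` and `modified` entries). The seven-day default, the function name, and the sample data are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta, timezone


def eligible_versions(time_map: dict, now: datetime,
                      min_age: timedelta = timedelta(days=7)) -> list:
    """Return versions published at least `min_age` before `now`, oldest first."""
    cutoff = now - min_age
    aged = []
    for version, stamp in time_map.items():
        if version in ("created", "modified"):
            continue  # bookkeeping entries, not versions
        # Normalise a trailing "Z" so older Python versions can parse the stamp.
        published = datetime.fromisoformat(stamp.replace("Z", "+00:00"))
        if published <= cutoff:
            aged.append((published, version))
    return [version for _, version in sorted(aged)]


# Synthetic metadata for a hypothetical package.
sample_times = {
    "created": "2020-01-01T00:00:00.000Z",
    "modified": "2024-06-10T00:00:00.000Z",
    "1.0.0": "2024-05-01T00:00:00.000Z",
    "1.0.1": "2024-06-09T12:00:00.000Z",
}
now = datetime(2024, 6, 10, tzinfo=timezone.utc)
print(eligible_versions(sample_times, now))
```

The result is ordered by publish time rather than by semantic version, so a real resolver would still need to apply its own version-range rules to the filtered list. The point of the sketch is only that a release published hours ago never becomes a candidate.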

At the same time, the root cause remains identity compromise. Maintainer and deployment accounts should be treated as high-value assets, with clear separation between day-to-day development access and release privileges. Reducing reliance on long-lived tokens and enforcing stronger controls around publishing workflows limits the impact of this type of attack.

The broader takeaway is consistent across supply chain incidents. The entry point may be a compromised dependency, but the impact is defined by how access is used afterward. The axios compromise was brief, but the conditions it created may persist well beyond the installation window.